A Practical Guide to Explainable AI

A post that breaks AI Explainability into understandable and actionable pieces

I’ve had a sudden realization recently: AI now permeates most of my everyday life. My search is primarily facilitated by AI, as are the random questions about the world that occur to me; my trading is fully AI-driven; my car is equipped with not-so-fancy but assistive AI tools; my work involves AI for everyday tasks; my writing is validated and often supported by AI; my social media attention is fully modulated by AI; my music taste has fully collapsed into a local minimum of AI-driven recommendations; my insurance profile involves some form of AI assessing risks. The list goes on.

While AI is involved in many aspects of my life, there are various degrees of justification for the impact it has on my life and on those around me. I’m perfectly fine with my car telling me to keep within my lane; after all, once my attention is back on the road, I can clearly see that I’m drifting out of it. But when ChatGPT tells me to take an over-the-counter medication, I am not immediately equipped to validate whether its rationale makes sense, even if it sounds quite convincing.

In the early days of LLMs picking up popularity, I recall someone sending me a reel in which a doctor shared a mind-blowing use of an LLM: generating medical reports in very convincing medical language. That case set off a few alarm bells for two reasons. First, my friend was genuinely impressed, but here’s the problem: the LLM was writing a medical report for a patient, yet my friend had no medical education. For all he knew, the LLM could have been throwing around gibberish medical terms that merely sounded plausible and convincing to a non-expert. The second reason is much more subtle. Even if the system was writing a completely plausible medical report, the question remained open: how was it doing that, and could it articulate its reasoning accurately? These are two very different questions, and they may have contradictory answers.

Allow me to muddy it even further with a bold statement: all LLM explanations are not even wrong. LLMs model linguistic patterns, and we have yet to invent a model that reasons from first principles. While you can certainly ask an LLM to explain its answer (and in many cases, doing so improves accuracy), the rationale is typically still a justification fitted to a preexisting conclusion, not deliberate grounding in reality. That’s because LLMs are trained on the conclusions of papers, but who’s to guarantee that the training data includes full details of how each conclusion was reached, let alone whether it was correct? By design, LLMs are not trained to “think” from first principles, nor can they question their own parameters.

That's muddy enough for now. For the rest of this post, I’ll break down the topic of explainability into a spectrum rather than a single, monolithic abstract concept. We’ll build an XAI vocabulary that'll enable you to reason about AI solutions tailored to different needs and requirements. It’ll set the ground for conversation between ML engineers, product managers, technical leads, executives, and policymakers. Imagine all these experts in the same meeting room, talking about explainable AI. This is probably the article to send in advance so they can speak the same language.

XAI vocabulary

First things first, let’s align on a few definitions:

Explainable AI refers to the methods and model outputs that make the inference of an AI system understandable to humans. There are many techniques to achieve this, and they differ in the quality and faithfulness of the explanations they produce.

Faithfulness measures how accurately an explanation represents the true reasoning process or mechanism of the underlying AI model.

Fidelity measures how well the explanation matches the behavior or prediction of the AI model. Some explainability methods require building an intermediate model, referred to as an explanatory model. Thus, fidelity is a crucial metric for measuring the quality of explanations.

It is worth noting that while fidelity and faithfulness are sometimes used interchangeably, they have distinct meanings. Faithfulness asks: “Does this explanation truly reflect how the model works internally?”. Fidelity asks: “Does this explanation (or explanatory model) produce similar outputs to the original model?”. A high-fidelity explanation might perfectly mimic a model’s output behavior without faithfully explaining its internal mechanism (low faithfulness). Conversely, a faithful explanation might capture the true mechanism but with some approximation errors in specific predictions (lower fidelity). In practice, both are important to understand and measure.
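To make the distinction concrete, here is a minimal sketch. The names `opaque_model`, `explanatory_model`, and `fidelity` are made up for illustration, and the "model" is a deliberately silly lookup table. The explanation reproduces every output (high fidelity) while describing arithmetic the model never performs (low faithfulness).

```python
# Hypothetical "opaque" model: it memorized its answers in a lookup table.
_MEMORIZED = {0: 1, 1: 3, 2: 5, 3: 7, 4: 9}


def opaque_model(x):
    """Returns a memorized answer; no arithmetic is involved."""
    return _MEMORIZED[x]


def explanatory_model(x):
    """Our explanation: 'the model doubles the input and adds one'."""
    return 2 * x + 1


def fidelity(model, explanation, inputs):
    """Share of inputs on which the explanation reproduces the model's output."""
    matches = sum(1 for x in inputs if model(x) == explanation(x))
    return matches / len(inputs)


inputs = list(_MEMORIZED)
score = fidelity(opaque_model, explanatory_model, inputs)
print(f"Fidelity: {score:.0%}")
```

On the inputs we tested, the explanation matches the model every time. Ask about `x = 5`, though, and the explanation confidently answers 11 while the model itself fails with a `KeyError`: the explanation was never a description of the mechanism.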

Interpretability refers to the degree to which a human can understand the cause of a model’s decision, but with a subtle focus on how. While explainability is generally concerned with why a specific prediction was made, the how further enriches our understanding and shifts focus to model internals. We often refer to inherent interpretability when discussing the model’s native ability to provide an interpretable explanation. The question that it tries to answer is, “Can I understand the model’s reasoning process?”. Later, we’ll explore specific examples of explainable and interpretable models, explainable but not interpretable, and neither explainable nor interpretable black box models.

Building a mental model

To successfully capture different levels of interpretability and explainability techniques, we’ll introduce a simple analogy of models using Python functions. After all, functions are like small models, often begging to be explained, especially when bugs (read: bias) creep in, and we need to urgently find a reason for their misbehavior (read: hallucination). You get the point. Python should be a pretty accessible language to understand, even if you’re not too familiar with it. We won’t be too concerned with the technicalities of Python, but anyone who understands English should be able to read simple Python procedures, so it should work pretty well. What’s more interesting is that we can directly apply explainability techniques to our toy models.

Imagine your friends asking you to lend them some money. After some time, you realize that some friends tend never to pay off their debt, a classic problem in the lending industry. After considering your options, you decide to build a model that assesses the risk of lending money to a friend. After long hours of analyzing data, you come up with this model, which works pretty well based on your history with past borrowers:

```python
def predict_friend_risk(years_known, times_borrowed_before, always_paid_back,
                        current_job_months, amount_requested):
    """Calculates how risky it is to lend money to a friend on a scale of 0-100.

    Higher scores mean higher risk (less likely to get paid back).

    Formula:
    Base score = 50
    - Subtract 2 points for each year you've known them (trust factor)
    - Add 5 points for each time they've borrowed money before
    - Subtract 30 points if they've always paid you back in the past
    - Subtract 0.5 points for each month they've been at their current job
    - Add 1 point for each $10 requested
    """
    score = 50
    score -= years_known * 2                # Long-term friends are more trustworthy
    score += times_borrowed_before * 5      # Frequent borrowers are risky
    score -= 30 if always_paid_back else 0  # Perfect repayment history is good
    score -= current_job_months * 0.5       # Job stability reduces risk
    score += amount_requested / 10          # Higher amounts are riskier

    # Ensure score stays within valid range
    return max(0, min(100, score))
```

This model (I’ll be referring to these functions as models from now on) represents a “white box” model and has the following properties:

- It is fully interpretable - you can see exactly how each factor about your friend impacts their risk score.

- The explanation (the source code) is fully faithful; it is exactly how the final score is calculated.

- It is fully explainable - you can easily explain to a friend, “I can’t lend you $500 because that adds 50 points to your risk score.”
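As a rough illustration of what "fully explainable" buys us, the sketch below splits the white-box score into per-factor contributions. The helper name `explain_friend_risk` and the example friend are assumptions made for this illustration; the arithmetic is copied from the formula above.

```python
def explain_friend_risk(years_known, times_borrowed_before, always_paid_back,
                        current_job_months, amount_requested):
    """Returns the per-factor contributions of the white-box formula."""
    return {
        "base_score": 50,
        "years_known": -2 * years_known,
        "times_borrowed_before": 5 * times_borrowed_before,
        "always_paid_back": -30 if always_paid_back else 0,
        "current_job_months": -0.5 * current_job_months,
        "amount_requested": amount_requested / 10,
    }


# Hypothetical friend: known for 3 years, borrowed once, always paid back,
# 12 months in the current job, asking for $500.
contributions = explain_friend_risk(
    years_known=3, times_borrowed_before=1, always_paid_back=True,
    current_job_months=12, amount_requested=500)

# Summing the contributions and clamping reproduces the model's score.
score = max(0, min(100, sum(contributions.values())))

for factor, points in contributions.items():
    print(f"{factor:>24}: {points:+.1f}")
print(f"{'risk score':>24}: {score:.1f}")
```

Because the explanation is the computation itself, the contributions add up to the score. The one caveat is the final clamp: when the raw sum falls outside 0-100, the contributions no longer sum to the reported score, which is worth mentioning when you share the breakdown.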

While this approach works for most cases, the model still had performance gaps, and some friends still did not pay off their debt, while others began to question your methods of rejection. So, you decide to build another, more sophisticated model:

```python
from friendship_utils import calculate_trust_level, assess_financial_stability
import numpy as np


def predict_friend_risk(years_known, times_borrowed_before, always_paid_back,
                        current_job_months, amount_requested):
    """Calculates how risky it is to lend money to a friend on a scale of 0-100.

    Higher scores mean higher risk (less likely to get paid back).

    Combines friendship history, past borrowing behavior, and current financial
    indicators to assess the likelihood of repayment.
    """
    # Base score
    score = 50

    # Trust factor is complex and calculated by a separate function
    # that considers more nuanced friendship dynamics
    score -= calculate_trust_level(years_known, always_paid_back)

    # Borrowing history is straightforward
    score += times_borrowed_before * 5

    # Financial stability is assessed by another function
    score -= assess_financial_stability(current_job_months)

    # Amount requested has direct and indirect effects
    score += amount_requested / 10  # Direct effect

    # Interaction effects between factors (this gets complicated)
    features = np.array([years_known, times_borrowed_before,
                         1 if always_paid_back else 0,
                         current_job_months, amount_requested])

    # These weights capture how factors interact with each other
    interaction_weights = np.array([
        [-0.1, 0.05, -0.2, -0.01, 0.002],   # How years_known interacts with others
        [0.05, 0.1, -0.3, -0.02, 0.005],    # How times_borrowed interacts with others
        [-0.2, -0.3, 0, -0.05, -0.01],      # How always_paid_back interacts with others
        [-0.01, -0.02, -0.05, 0, -0.001],   # How job_months interacts with others
        [0.002, 0.005, -0.01, -0.001, 0]    # How amount interacts with others
    ])

    # Apply the interaction effects
    for i in range(5):
        for j in range(5):
            if i != j:
                score += features[i] * features[j] * interaction_weights[i][j]

    return max(0, min(100, score))
```

The code becomes longer, but some of the model's properties have also changed.

- Our model is now only partly interpretable; while we can see the source code and follow the logic, the trust and stability calculations are hidden and therefore not interpretable.

- Explainability becomes a challenge. Now you might tell a friend, “Your job instability is a factor,” but you can’t fully explain how it’s calculated. Good luck explaining interaction weights.
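To get a feel for why the interaction weights are hard to explain, the sketch below isolates just the interaction part of the gray-box model above and breaks it down by feature pair. The weights are copied from that model; the helper name `interaction_breakdown` and the example friend are assumptions for illustration.

```python
from itertools import combinations

FEATURE_NAMES = ["years_known", "times_borrowed_before", "always_paid_back",
                 "current_job_months", "amount_requested"]

# Same interaction weights as in the gray-box model.
INTERACTION_WEIGHTS = [
    [-0.1, 0.05, -0.2, -0.01, 0.002],
    [0.05, 0.1, -0.3, -0.02, 0.005],
    [-0.2, -0.3, 0, -0.05, -0.01],
    [-0.01, -0.02, -0.05, 0, -0.001],
    [0.002, 0.005, -0.01, -0.001, 0],
]


def interaction_breakdown(features):
    """Returns the score contribution of every unordered feature pair.

    The model's double loop visits (i, j) and (j, i) separately, so each
    pair contributes through both of its weights.
    """
    breakdown = {}
    for i, j in combinations(range(len(features)), 2):
        weight = INTERACTION_WEIGHTS[i][j] + INTERACTION_WEIGHTS[j][i]
        pair = (FEATURE_NAMES[i], FEATURE_NAMES[j])
        breakdown[pair] = features[i] * features[j] * weight
    return breakdown


# Hypothetical friend: known 3 years, borrowed once, always paid back (1),
# 12 months in the current job, asking for $500.
pairs = interaction_breakdown([3, 1, 1, 12, 500])
total = sum(pairs.values())

for (a, b), points in sorted(pairs.items(), key=lambda kv: abs(kv[1]), reverse=True):
    print(f"{a} x {b}: {points:+.2f}")
print(f"Total interaction effect: {total:+.2f}")
```

Even with the full breakdown in hand, a statement like "the combination of your job tenure and the amount requested moved your score" is far harder to communicate than any single line of the white-box formula, and the trust and stability functions remain hidden on top of that.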

Finally, after assessing the performance of the “gray box” model above, you found that someone had a similar problem before, and you can use their model. So, you decide to give it a go:

```python
from friend_risk_ml import FriendRiskPredictor
from friendship_data import get_friend_history


def predict_friend_risk(years_known, times_borrowed_before, always_paid_back,
                        current_job_months, amount_requested):
    """Calculates how risky it is to lend money to a friend on a scale of 0-100.

    Higher scores mean higher risk (less likely to get paid back).

    Uses an advanced machine learning model trained on your entire history
    of lending money to friends and whether they paid you back.
    """
    # Get additional context from your friendship history database
    friend_history = get_friend_history()

    # Prepare all inputs for the ML model
    features = {
        'years_known': years_known,
        'times_borrowed_before': times_borrowed_before,
        'always_paid_back': always_paid_back,
        'current_job_months': current_job_months,
        'amount_requested': amount_requested,
        'day_of_week': 4,  # It's Friday - people often borrow before weekends
        'month': 11,       # November - holiday season approaches
        'friend_network_data': friend_history.get_social_graph(),
        'previous_excuses': friend_history.get_excuse_patterns()
    }

    # The actual risk calculation happens inside this black-box predictor
    predictor = FriendRiskPredictor()
    risk_score = predictor.predict(features)

    return risk_score
```

This new model has a drastically different explainability profile:

- Our model is now completely uninterpretable.

- When it comes to explainability, you can only say, “My system says you’re high risk,” without explaining why.

While this “black box” model might offer more accurate results, it clearly lacks explanations, which in turn has social implications: your friends might feel judged by an algorithm they don’t understand.

In both cases, gray and black box, we say we have an explainability gap, a new term we introduce to describe a property of a model that lacks some degree of explanation. Yet, the question remains - what is the ideal degree of explainability? Is the goal to have a completely explainable and interpretable model for predictions? Not necessarily. It all depends on your explainability profile requirements, that is, our ideal target state of the model’s explainability. Thus, the first decision we ought to make is to decide on the explainability profile, then take any model we defined and identify the explainability gap. If it exists, we’ll address it or try a different model.
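One way to make the idea of a profile and a gap tangible is to write them down as data. The sketch below is only an illustration: the `ExplainabilityProfile` class, the 0-2 scale, and the numbers assigned to each model are assumptions, not an established scoring scheme.

```python
from dataclasses import dataclass


# Hypothetical ordinal scale: 0 = none, 1 = partial, 2 = full.
@dataclass(frozen=True)
class ExplainabilityProfile:
    interpretability: int
    explainability: int
    faithfulness: int


def explainability_gap(required, actual):
    """Returns, per dimension, how far the model falls short of the requirement."""
    gap = {}
    for dimension in ("interpretability", "explainability", "faithfulness"):
        shortfall = getattr(required, dimension) - getattr(actual, dimension)
        if shortfall > 0:
            gap[dimension] = shortfall
    return gap


# Target state we decided on first, then the models we are considering.
required = ExplainabilityProfile(interpretability=1, explainability=2, faithfulness=1)
gray_box = ExplainabilityProfile(interpretability=1, explainability=1, faithfulness=1)
black_box = ExplainabilityProfile(interpretability=0, explainability=0, faithfulness=0)

print("Gray box gap: ", explainability_gap(required, gray_box))
print("Black box gap:", explainability_gap(required, black_box))
```

An empty gap means the model already meets the target profile; anything else tells us which dimension the remaining work has to address.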

Now that we have defined mental models, let’s explore different methods to close the explainability gaps for gray and black box models. After this intuition-building exercise for the explainability spectrum, we will map our knowledge to real models, such as linear regressions, random forests, boosted trees, neural networks, and others.

Post-hoc explanations

When models aren’t inherently interpretable or lack the level of explainability we seek, post hoc methods are often employed to provide additional insight that helps understand what opaque models are doing. Well, sort of, there’s a caveat to it, but don’t worry about it for now.

Global explanation methods

Global explanations help us understand the overall behavior of our model across all predictions. They answer questions like “What factors does this model generally consider most important?”

A common example of global explanations is the breakdown of feature importance. Let's try to follow our examples and compute feature importance using something like this:

```python
from friend_risk_ml import FriendRiskPredictor
import numpy as np


def calculate_feature_importance_blackbox():
    """
    Analyzes which factors our black-box friend risk model considers most important
    by systematically varying each feature and measuring impact on predictions.
    """
    predictor = FriendRiskPredictor()

    # Create a baseline friend profile
    baseline_profile = {
        'years_known': 3,
        'times_borrowed_before': 1,
        'always_paid_back': True,
        'current_job_months': 12,
        'amount_requested': 100,
        'day_of_week': 4,
        'month': 11,
        'friend_network_data': {},
        'previous_excuses': []
    }
    baseline_score = predictor.predict(baseline_profile)

    # Test impact of each feature by varying it
    feature_impacts = {}

    # Test years known (0 to 10)
    scores_years = []
    for years in range(11):
        profile = baseline_profile.copy()
        profile['years_known'] = years
        scores_years.append(predictor.predict(profile))
    feature_impacts['years_known'] = np.std(scores_years)

    # Test borrowing frequency (0 to 5)
    scores_borrowed = []
    for times in range(6):
        profile = baseline_profile.copy()
        profile['times_borrowed_before'] = times
        scores_borrowed.append(predictor.predict(profile))
    feature_impacts['times_borrowed_before'] = np.std(scores_borrowed)

    # Test repayment history
    profile_bad_history = baseline_profile.copy()
    profile_bad_history['always_paid_back'] = False
    impact_repayment = abs(predictor.predict(profile_bad_history) - baseline_score)
    feature_impacts['always_paid_back'] = impact_repayment

    # Test job stability (0 to 24 months)
    scores_job = []
    for months in range(0, 25, 3):
        profile = baseline_profile.copy()
        profile['current_job_months'] = months
        scores_job.append(predictor.predict(profile))
    feature_impacts['current_job_months'] = np.std(scores_job)

    # Test loan amount ($50 to $1000)
    scores_amount = []
    for amount in range(50, 1001, 50):
        profile = baseline_profile.copy()
        profile['amount_requested'] = amount
        scores_amount.append(predictor.predict(profile))
    feature_impacts['amount_requested'] = np.std(scores_amount)

    # Normalize to get relative importance
    total_impact = sum(feature_impacts.values())
    importance_scores = {k: v / total_impact for k, v in feature_impacts.items()}

    return importance_scores

# Example output showing which features matter most:
# {'always_paid_back': 0.45, 'amount_requested': 0.23, 'current_job_months': 0.18,
#  'years_known': 0.10, 'times_borrowed_before': 0.04}
# This means payment history (45%) and loan amount (23%) are the biggest factors
```

The above will work for all our toy models, as well as any other model, since it is model-agnostic. In addition to a score, each model card can be complemented with a breakdown of how each feature affects the model’s behavior, thus offering higher fidelity by measuring the model’s sensitivity to each feature. While we can claim high fidelity, we cannot claim high faithfulness because our explanations are not tied in any way to the model's internal mechanism. In other words, while we can have confidence in feature importance and that outputs behave roughly as explained, the model has no obligations to follow the same logic or claimed feature contribution and may use an entirely different procedure that happens to be close to what we came up with for feature importance.

It’s worth noting that some model architectures provide feature importance by design, thus making them highly interpretable. In this case, the model would score high on faithfulness due to the coupled nature between score calculation and feature importance breakdown. The example we explored here is specifically for post-hoc feature breakdown.
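The grid-based function above varies features around a single baseline profile. A closely related and widely used model-agnostic technique is permutation importance, which shuffles one feature's column across a dataset instead. The sketch below is a label-free variant: it measures how much predictions move when a column is shuffled, rather than how much a loss degrades, because our toy setup has no ground-truth labels. `toy_model` and the four-row dataset are made up for illustration.

```python
import random


def toy_model(row):
    """Stand-in for any opaque predictor (hypothetical; swap in your own)."""
    score = 50 - 2 * row["years_known"] + 5 * row["times_borrowed_before"]
    score += row["amount_requested"] / 10
    return max(0, min(100, score))  # 'favorite_color' is deliberately ignored


def permutation_importance(model, rows, features, n_repeats=20, seed=0):
    """Mean absolute prediction change when one feature's column is shuffled."""
    rng = random.Random(seed)
    baseline = [model(row) for row in rows]
    importance = {}
    for feature in features:
        total_change = 0.0
        for _ in range(n_repeats):
            shuffled = [row[feature] for row in rows]
            rng.shuffle(shuffled)
            for row, value, base in zip(rows, shuffled, baseline):
                total_change += abs(model({**row, feature: value}) - base)
        importance[feature] = total_change / (n_repeats * len(rows))
    return importance


# Small made-up dataset of past requests.
rows = [
    {"years_known": 1, "times_borrowed_before": 3, "amount_requested": 300, "favorite_color": "red"},
    {"years_known": 5, "times_borrowed_before": 0, "amount_requested": 100, "favorite_color": "blue"},
    {"years_known": 8, "times_borrowed_before": 1, "amount_requested": 250, "favorite_color": "green"},
    {"years_known": 2, "times_borrowed_before": 4, "amount_requested": 50, "favorite_color": "red"},
]
features = ["years_known", "times_borrowed_before", "amount_requested", "favorite_color"]

importance = permutation_importance(toy_model, rows, features)
for name, value in sorted(importance.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{name}: {value:.2f}")
```

A feature the model never reads, such as `favorite_color` here, comes out with zero importance no matter how it is shuffled. Like the grid approach, this only probes input-output behavior, so the same fidelity-versus-faithfulness caveat applies.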

Another method for global explanation is partial dependence analysis. This provides a more granular breakdown by examining how different values of one feature impact the output, often revealing non-linear relationships that are otherwise overlooked by feature importance explanations. For example, we might use something like this to come up with explanation breakdowns for one or all features’ dynamics:

```python
from friend_risk_ml import FriendRiskPredictor


def partial_dependence_analysis(feature_name, feature_range):
    """
    Shows how the model's predictions change as we vary one feature
    while keeping all others at their typical values.
    """
    predictor = FriendRiskPredictor()

    # Typical friend profile (median values from our data)
    typical_profile = {
        'years_known': 4,
        'times_borrowed_before': 1,
        'always_paid_back': True,
        'current_job_months': 18,
        'amount_requested': 200,
        'day_of_week': 4,
        'month': 11,
        'friend_network_data': {},
        'previous_excuses': []
    }

    predictions = []
    for value in feature_range:
        profile = typical_profile.copy()
        profile[feature_name] = value
        predictions.append(predictor.predict(profile))

    return list(zip(feature_range, predictions))

# Example usage:
# borrowing_effect = partial_dependence_analysis('times_borrowed_before', range(0, 6))
# Result: [(0, 25), (1, 35), (2, 48), (3, 65), (4, 78), (5, 85)]
# Shows risk increases non-linearly: each of the first three borrows adds more
# risk than the previous one (+10, +13, +17), then the increase tapers (+13, +7)
```

This method has a similar explainability profile to feature importance, except it adds more detail to the mix, often revealing non-linear relationships and output dynamics across different value scales. Imagine a case where the model penalizes one feature more as its value becomes larger. While the overall importance might average to a low value due to low representation, our partial dependence might reveal that it contributes significantly more as the value starts to exceed a certain threshold. Say, once times_borrowed_before rises above 2, the model penalizes the score much more aggressively.
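The function above holds every other feature at one typical profile. The classic form of partial dependence instead averages predictions over a whole dataset while forcing the feature of interest to each grid value. Here is a minimal sketch of that averaging; `toy_model`, its threshold at three borrows, and the three-row dataset are assumptions made for illustration.

```python
def toy_model(row):
    """Hypothetical opaque predictor with a threshold effect on borrowing."""
    penalty = 5 * row["times_borrowed_before"]
    if row["times_borrowed_before"] >= 3:
        penalty += 20  # frequent borrowers are penalized much harder
    return max(0, min(100, 30 + penalty - 2 * row["years_known"]))


def partial_dependence(model, rows, feature, grid):
    """Average prediction over all rows with `feature` forced to each grid value."""
    curve = []
    for value in grid:
        predictions = [model({**row, feature: value}) for row in rows]
        curve.append((value, sum(predictions) / len(predictions)))
    return curve


# Made-up dataset; only the two features the toy model uses.
rows = [
    {"years_known": 1, "times_borrowed_before": 0},
    {"years_known": 4, "times_borrowed_before": 2},
    {"years_known": 7, "times_borrowed_before": 5},
]

curve = partial_dependence(toy_model, rows, "times_borrowed_before", range(6))
for value, average in curve:
    print(f"times_borrowed_before={value}: average risk {average:.1f}")
```

The curve climbs by 5 points per borrow until the jump between 2 and 3 borrows, which is exactly the kind of threshold a single importance number would hide. Averaging over real rows also avoids relying on one hand-picked "typical" profile, at the cost of more model calls.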

Local explanation methods

Global explanations are great for model cards and building confidence overall, but often, it is not enough. Consider a case where you declined one of your friend's requests and explained that, based on your model, her application is rejected. You explain that the way it usually works is by feeding such and such data points, and you can also share a breakdown of how each data point contributes to the overall score. Your friend then goes, “Okay, I think I follow your system, but what was exactly wrong with my specific case?” The question would stump you unless you have local explanations at hand or perhaps a highly interpretable model from the get-go.

Counterfactuals

To address our lack of a more granular answer, let's employ a counterfactual method in our explanations. The core question that counterfactual explanations answer is, “What’s the smallest change I could make to get a different outcome?” Imagine your friend asks to borrow money, and your model says they’re high-risk. Instead of saying “no,” counterfactual explanations tell them precisely what they’d need to change to become low-risk. Not only are these explanations “local” to their unique case, but they’re highly actionable; perhaps they can work on their creditworthiness in the future.

At their core, counterfactual explanations solve an optimization problem: find a new version of our friend's profile (let's call it $x'$) that is as close as possible to their current profile ($x$) but gives us the outcome we want (contrary to the one we have).

Mathematically, we're solving:

$$x^{*} = \arg\min_{x'} \; d(x, x') + \lambda \cdot \max\big(0,\; f(x') - t\big)$$

Where:

$d(x, x')$ is the distance between the original and modified profiles. Think of this as measuring how much we need to change things. The smaller this number, the more realistic our suggestion becomes.

$f(x')$ is the prediction output of our model. We aim to keep this below our target threshold $t$.

$\max(0, f(x') - t)$ creates a penalty that gets bigger (remember that we want to minimize overall) when we're hovering above our target. If the predicted score is already below the target, this becomes zero.

$\lambda$ is our regularization parameter that controls the trade-off. Turn it up, and we prioritize hitting the target over making small changes. Turn it down, and we prioritize small changes over hitting the exact target.

The beauty of this mathematical formulation lies in its ability to balance two competing goals: making minimal changes (so the advice is practical) and achieving the desired outcome (so the advice is useful).

The last nuance is that we need to pay special attention to the different types of features we have. Some features about our friends are numbers (like "employed for 8 months"), while others are yes/no (like "always paid back loans"). Our optimization needs to handle both continuous and categorical features.
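As a rough sketch of that objective (distance plus a penalty, weighted by the regularization parameter, for every point the score sits above the target), here is a brute-force grid search. Everything in it is an assumption for illustration: `risk_model` reuses the first white-box formula as a stand-in predictor, the `SCALES` are arbitrary choices to make units comparable, and a real implementation would use a proper optimizer rather than enumerating candidates.

```python
from itertools import product


def risk_model(profile):
    """Stand-in scorer (the first white-box formula); any predictor works here."""
    score = 50 - 2 * profile["years_known"] + 5 * profile["times_borrowed_before"]
    score -= 30 if profile["always_paid_back"] else 0
    score -= 0.5 * profile["current_job_months"]
    score += profile["amount_requested"] / 10
    return max(0, min(100, score))


# Hypothetical per-feature scales so distances are comparable across units.
SCALES = {"amount_requested": 100, "current_job_months": 6, "always_paid_back": 1}


def distance(original, candidate):
    """Scaled L1 distance; flipping a yes/no feature counts as one unit."""
    return sum(abs(float(candidate[k]) - float(original[k])) / scale
               for k, scale in SCALES.items())


def find_counterfactual(original, target, lam=10.0):
    """Grid search minimizing distance + lam * max(0, score - target)."""
    amounts = range(50, original["amount_requested"] + 1, 50)              # numeric
    months = [original["current_job_months"] + m for m in (0, 3, 6, 12)]   # numeric
    paid_back = {original["always_paid_back"], True}                       # yes/no
    best, best_loss = None, float("inf")
    for amount, job, paid in product(amounts, months, paid_back):
        candidate = {**original, "amount_requested": amount,
                     "current_job_months": job, "always_paid_back": paid}
        penalty = max(0, risk_model(candidate) - target)
        loss = distance(original, candidate) + lam * penalty
        if loss < best_loss:
            best, best_loss = candidate, loss
    return best


friend = {"years_known": 1, "times_borrowed_before": 3, "always_paid_back": False,
          "current_job_months": 2, "amount_requested": 500}
counterfactual = find_counterfactual(friend, target=40)
changes = {k: (friend[k], v) for k, v in counterfactual.items() if v != friend[k]}
print("Suggested changes (old, new):", changes)
print("New risk score:", risk_model(counterfactual))
```

Numeric features are searched over a grid of values while the yes/no feature is only ever flipped, which is the simplest way to respect the two feature types. The choice of scales quietly encodes what counts as a "small" change, so it deserves as much scrutiny as the model itself.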

def find_minimal_changes(friend_profile, target_threshold=40):

"""

Finds the smallest changes needed to get a different outcome.

Much simpler than mathematical optimization but demonstrates the core concept.

"""

from friend_risk_ml import FriendRiskPredictor

predictor = FriendRiskPredictor()

current_score = predictor.predict(friend_profile)

if current_score <= target_threshold:

return f"Current risk score {current_score:.1f} is already acceptable"

suggestions = []

# Try increasing years known (time-based improvement)

test_profile = friend_profile.copy()

for extra_years in range(1, 4):

test_profile['years_known'] = friend_profile['years_known'] + extra_years

if predictor.predict(make_full_profile(test_profile)) <= target_threshold:

suggestions.append(f"Wait {extra_years} more year(s) to build trust")

break

# Try reducing loan amount (immediate option)

test_profile = friend_profile.copy()

for reduction in [50, 100, 200, 300]:

new_amount = max(50, friend_profile['amount_requested'] - reduction)

test_profile['amount_requested'] = new_amount

if predictor.predict(make_full_profile(test_profile)) <= target_threshold:

suggestions.append(f"Reduce loan amount by ${reduction} (to ${new_amount})")

break

# Try increasing job stability

test_profile = friend_profile.copy()

for extra_months in [3, 6, 12]:

test_profile['current_job_months'] = friend_profile['current_job_months'] + extra_months

if predictor.predict(make_full_profile(test_profile)) <= target_threshold:

suggestions.append(f"Wait {extra_months} months for job stability to improve")

break

# Check if perfect repayment history would help

if not friend_profile['always_paid_back']:

test_profile = friend_profile.copy()

test_profile['always_paid_back'] = True

if predictor.predict(make_full_profile(test_profile)) <= target_threshold:

suggestions.append("Establish a perfect repayment history first")

return {

'current_score': current_score,

'target_threshold': target_threshold,

'actionable_suggestions': suggestions[:2], # Top 2 most practical

'explanation': f"Current risk score: {current_score:.1f}. Here's what could help:"

}

# Example usage:

# risky_friend = {

# 'years_known': 1, 'times_borrowed_before': 3, 'always_paid_back': False,

# 'current_job_months': 2, 'amount_requested': 500

# }

# suggestions = find_minimal_changes(risky_friend)

# print(f"Current score: {suggestions['current_score']}")

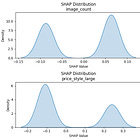

# print("Suggestions:", suggestions['actionable_suggestions'])SHAP (SHapley Additive exPlanations)

Another post hoc local explanation we can add is SHAP values, which answer the question: "How much did each factor contribute to this specific decision?" Unlike our earlier oversimplified approach, SHAP has a mathematically rigorous method for ensuring fair attribution, which stems from game theory, specifically the concept of the Shapley value.

Our earlier "substitute one feature at a time" approach with counterfactuals had a fatal flaw: it ignored interactions between features. Perhaps "employed for 2 years" seems unfavorable in isolation, but combined with "perfect payment history," it might be acceptable. SHAP values average each feature's marginal contribution over all possible combinations of the other features, so interactions are shared out among the features involved rather than ignored.
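To show what "fair attribution" means in practice, here is a minimal sketch that computes exact Shapley values by brute force for a tiny model. This is not how the SHAP library works internally (it relies on approximations and model-specific shortcuts, since exact enumeration grows exponentially with the number of features). `risk_model`, the baseline "average friend", and the friend being explained are all made up for illustration.

```python
from itertools import combinations
from math import factorial


def risk_model(profile):
    """Toy scorer with an interaction: a clean record matters more for big loans."""
    score = 50 + 5 * profile["times_borrowed_before"] + profile["amount_requested"] / 10
    if profile["always_paid_back"]:
        score -= 10 + profile["amount_requested"] / 20
    return score


def shapley_values(model, instance, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {k: (instance[k] if k in coalition else baseline[k]) for k in names}
        return model(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            # Weight of coalitions of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_feature = value(set(subset) | {name})
                without_feature = value(set(subset))
                total += weight * (with_feature - without_feature)
        phi[name] = total
    return phi


# Hypothetical baseline ("average friend") and the friend being explained.
baseline = {"times_borrowed_before": 1, "always_paid_back": False, "amount_requested": 100}
friend = {"times_borrowed_before": 3, "always_paid_back": True, "amount_requested": 500}

phi = shapley_values(risk_model, friend, baseline)
for name, contribution in phi.items():
    print(f"{name}: {contribution:+.2f}")
print(f"Sum of contributions: {sum(phi.values()):+.2f}")
print(f"Score minus baseline score: {risk_model(friend) - risk_model(baseline):+.2f}")
```

The contributions add up to the difference between this friend's score and the baseline score, which is the property that makes Shapley attributions easy to present. The interaction between repayment history and loan amount is split between those two features instead of being credited to whichever one we happened to vary first.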

We wrote a detailed article on using SHAP in A/B testing scenarios.

The explainability-performance trade-off

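The relationship between model performance and explainability typically follows a predictable pattern: as we move from white box to gray box to black box models, predictive performance tends to improve while interpretability and explainability decline.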

How much explainability is enough?

The goal isn't to maximize explainability for all use cases, but to use appropriate explainability for the specific context and requirements. This raises a natural question: how do we determine what is appropriate?

The answer to this question will largely depend on the industry and jurisdiction in which you’re operating. For example, healthcare, financial lending, and autonomous vehicle safety would likely require a high degree of explainability, whereas marketing and entertainment might get by with basic explanations or even none at all. Jurisdiction matters mostly through its regulatory requirements. For example, the GDPR's right to explanation and the Fair Credit Reporting Act effectively rule out completely black box models, but aren't very strict about which explainability methods are used. At the same time, healthcare in many jurisdictions calls for explanations with high fidelity and faithfulness. In 2021, Health Canada, the FDA, and the UK’s MHRA identified 10 guiding principles for good machine learning practice (GMLP). Notably, two principles pertain to explainability. The first, "**Users Are Provided Clear, Essential Information**", includes exposing interpretations. The second and more critical driver, "**Focus Is Placed on the Performance of the Human-AI Team**", emphasizes the Human-AI team's performance over the model's performance in isolation. This means that the model’s standalone performance matters less than the operator's ability to interpret the results and take appropriate action. In turn, the combination of model performance (e.g., precision) and explanation quality (e.g., SHAP values) is more important than model performance alone.

Mapping to real-world models

Let's connect our friend risk predictor concepts to real machine learning algorithms.

White box models with high interpretability

Linear Regression:

Interpretability: Perfect - each coefficient shows exact impact

Explainability: Great - "Each additional year of friendship reduces risk by 2 points"

When to use: Regulated environments, need for audit trails

Decision Trees:

Interpretability: High - can follow decision path

Explainability: High - can explain exact reasoning chain

When to use: Need human-readable business rules

Gray box models with medium interpretability

Random Forest:

Interpretability: Medium - can see feature importance but not individual predictions

Explainability: Medium - can explain overall patterns, harder for specific cases

Post-hoc methods: Feature importance, partial dependence plots work well

Gradient Boosting:

Interpretability: Medium-Low - complex interactions between weak learners

Explainability: Requires post-hoc methods like SHAP

When to use: High performance needed with some explainability

Black box models with low interpretability

Deep Neural Networks:

Interpretability: Very Low - millions of parameters, complex interactions

Explainability: Requires post-hoc methods

Post-hoc methods: SHAP, LIME, attention mechanisms, saliency maps

Ensemble Methods:

Interpretability: Very Low - combining multiple different algorithms

Explainability: Model-agnostic methods like SHAP work best

Model-specific explainability techniques

For tree-based models

Built-in feature importance: Measures how much each feature reduces impurity

Tree visualization: Can literally draw the decision process

Rule extraction: Convert tree paths into if-then rules
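Rule extraction is simple enough to sketch directly. The tree below is hand-built and hypothetical (a trained tree from a library would expose the same kind of feature/threshold/children structure), and `decide_with_rule` is a made-up helper that walks the tree and records the path it takes as an if-then rule.

```python
# Hypothetical hand-built tree; a trained tree would have the same shape.
TREE = {
    "feature": "always_paid_back", "threshold": 0.5,
    "left": {  # always_paid_back <= 0.5, i.e. False
        "feature": "amount_requested", "threshold": 100,
        "left": {"label": "approve"},
        "right": {"label": "reject"},
    },
    "right": {  # always_paid_back > 0.5, i.e. True
        "feature": "amount_requested", "threshold": 1000,
        "left": {"label": "approve"},
        "right": {"label": "reject"},
    },
}


def decide_with_rule(tree, profile):
    """Walks the tree and returns (label, the if-then rule that was followed)."""
    conditions = []
    node = tree
    while "label" not in node:
        feature, threshold = node["feature"], node["threshold"]
        if profile[feature] <= threshold:
            conditions.append(f"{feature} <= {threshold}")
            node = node["left"]
        else:
            conditions.append(f"{feature} > {threshold}")
            node = node["right"]
    rule = "IF " + " AND ".join(conditions) + f" THEN {node['label']}"
    return node["label"], rule


label, rule = decide_with_rule(TREE, {"always_paid_back": False, "amount_requested": 500})
print(rule)
```

The extracted rule is both the explanation and the exact computation, which is why a single tree scores high on faithfulness. That property weakens quickly once hundreds of trees are combined.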

For neural networks

Attention mechanisms: Show which parts of the input the model focuses on

Layer-wise relevance propagation: Traces predictions back through network layers

Gradient-based methods: Show which input changes would most affect the output
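As a minimal sketch of the gradient-based idea, the snippet below estimates how sensitive a tiny network's output is to each input. The network, its weights, and the input point are made up, and the gradient is approximated with central finite differences; real frameworks compute it exactly with automatic differentiation.

```python
import math


def tiny_network(x):
    """Hypothetical two-input network with fixed weights and one hidden layer."""
    h1 = math.tanh(0.8 * x[0] - 0.2 * x[1])
    h2 = math.tanh(0.1 * x[0] + 0.9 * x[1])
    return 1.5 * h1 - 0.5 * h2


def saliency(model, x, eps=1e-5):
    """Central finite-difference estimate of d(output)/d(input_i) at x."""
    gradients = []
    for i in range(len(x)):
        up = list(x)
        up[i] += eps
        down = list(x)
        down[i] -= eps
        gradients.append((model(up) - model(down)) / (2 * eps))
    return gradients


point = [0.5, -1.0]
for i, gradient in enumerate(saliency(tiny_network, point)):
    print(f"input {i}: d(output)/d(input) ~ {gradient:+.3f}")
```

The sign tells us which direction of change would push the output up, and the magnitude tells us how strongly. Keep in mind that this is a local statement about one input point: the same feature can have a very different gradient elsewhere.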

For ensemble methods

Model-agnostic approaches: SHAP, LIME work regardless of the underlying algorithm

Consensus explanations: Aggregate explanations from individual models

Disagreement analysis: Identify when different models give conflicting explanations
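Consensus and disagreement can be sketched in a few lines once each model in the ensemble has produced its own attributions for a prediction. The three models and their numbers below are invented, and flagging a sign conflict is just one simple way to define "disagreement".

```python
from statistics import mean

# Hypothetical per-model attributions for one prediction (e.g. SHAP-style values).
attributions = {
    "model_a": {"always_paid_back": -20.0, "amount_requested": 15.0, "years_known": -4.0},
    "model_b": {"always_paid_back": -25.0, "amount_requested": 10.0, "years_known": 3.0},
    "model_c": {"always_paid_back": -18.0, "amount_requested": 12.0, "years_known": -1.0},
}


def consensus_and_disagreement(per_model):
    """Averages attributions and flags features whose sign differs across models."""
    features = list(next(iter(per_model.values())))
    consensus, conflicts = {}, []
    for feature in features:
        values = [scores[feature] for scores in per_model.values()]
        consensus[feature] = mean(values)
        if min(values) < 0 < max(values):
            conflicts.append(feature)
    return consensus, conflicts


consensus, conflicts = consensus_and_disagreement(attributions)
for feature, value in consensus.items():
    print(f"{feature}: {value:+.2f}")
print("Models disagree on the direction of:", conflicts)
```

A feature that lands in the conflict list is one where the averaged attribution should not be shown to a user without a caveat, because the ensemble members do not agree on whether it helped or hurt.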

Quick reference for choosing an XAI approach

Do we need to explain to regulators or auditors?

Use: White box model + documentation.

Why: High faithfulness, audit trail.

Does the business want general feature insights?

Use: Gray box + feature importance.

Why: Balance of performance and interpretability.

Users ask, “Why was I rejected?”

Use: SHAP values + counterfactuals.

Why: Individual explanations + actionable advice.

Need simple business rules?

Use: Decision trees or linear models.

Why: Inherently interpretable.

High-stakes decisions (medical, legal)?

Use: White box or extensive post-hoc explanations.

Why: Transparency for critical outcomes.

Performance is paramount?

Use: Black box + post-hoc explanations.

Why: Achieves best accuracy with an explanation layer.

Bringing it all together: the XAI spectrum

The explainable AI landscape can be visualized as a spectrum with multiple dimensions:

Interpretability Spectrum: Inherent → Requires Tools → Opaque

Explainability Spectrum: Self-Evident → Post-hoc Possible → Unexplainable

Fidelity Spectrum: Perfect Match → Good Approximation → Poor Approximation

Faithfulness Spectrum: True Mechanism → Simplified Process → Misleading

Remember: the goal of explainable AI isn't to make every model a white box, but to provide the right level of transparency for each specific context. Sometimes, a simple feature importance chart is enough; sometimes, you need detailed counterfactual scenarios. The key is matching the explanation to the need.

References

https://www.sciencedirect.com/science/article/pii/S0306457324002590

https://arxiv.org/abs/1711.00399

https://www.canada.ca/en/health-canada/services/drugs-health-products/medical-devices/good-machine-learning-practice-medical-device-development.html

https://www.canada.ca/en/health-canada/services/drugs-health-products/medical-devices/transparency-machine-learning-guiding-principles.html